Where raw telemetry meets rigorous verification.

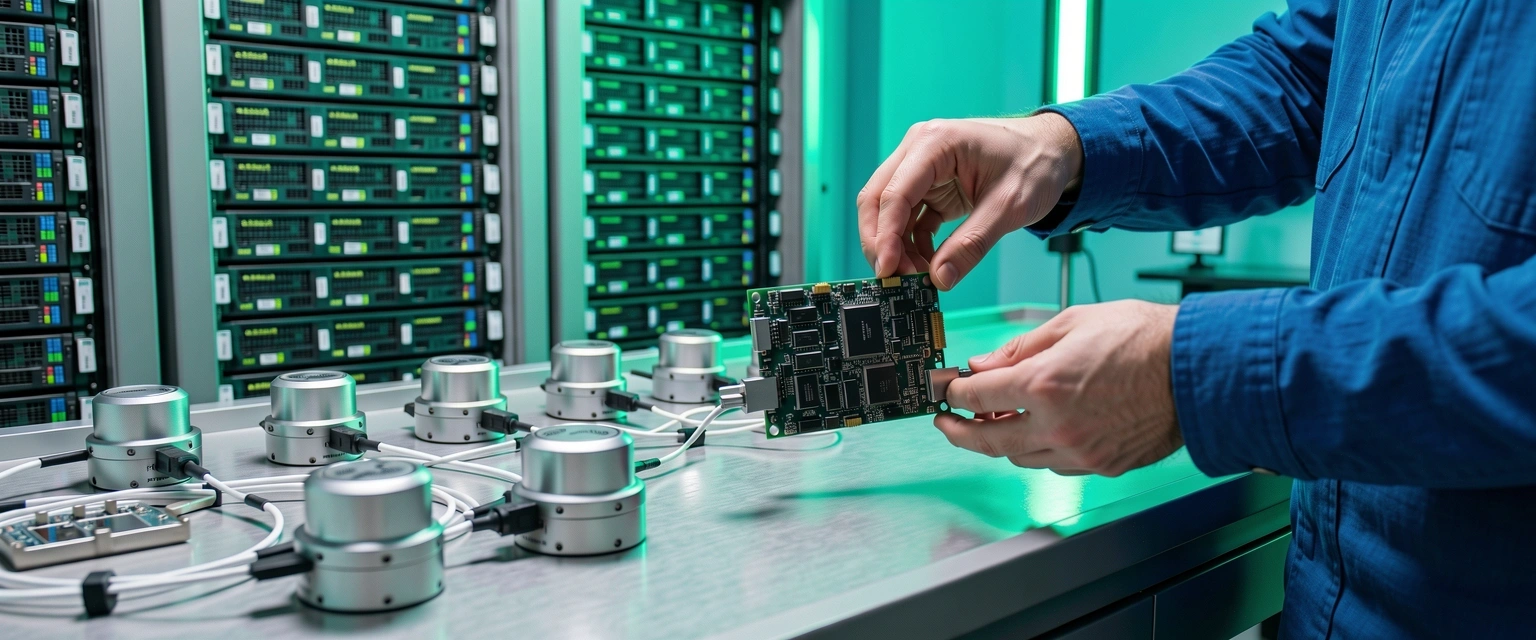

The Intelligence Lab is our internal engine for validation. We do not just process data; we interrogate it. In an era of automated noise, our Sydney-based team applies a high-signal filter to edge intelligence to ensure every insight is operationally sound.

The Signal Protocol

Our methodology is built on the reality that data at the edge is often fragmented, intermittent, and prone to environmental interference. Pacific Edge Intelligence uses a proprietary four-stage verification cycle to turn that unreliable input into findings our clients can act on.

"Analytics without context is just overhead. We build models that understand the physical constraints of the Australian enterprise landscape."

— Lead Research Architect

Distillation at Source

We deploy custom edge intelligence kernels directly onto hardware. By processing at the source, we eliminate the latency of back-hauling junk data. This stage focuses on noise cancellation and anomaly detection before a single byte leaves the local network.
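As a rough illustration of what filtering at the source can look like, the sketch below drops outlier readings on the device before anything is transmitted. It is not our production kernel: the `EdgeFilter` class, the rolling median/MAD test, and the window, warm-up, and threshold values are all assumptions chosen for the example.

```python
from collections import deque
from statistics import median


class EdgeFilter:
    """Hypothetical on-device filter using a rolling median/MAD outlier test."""

    def __init__(self, window=20, warmup=5, threshold=3.5):
        self.history = deque(maxlen=window)  # recent readings accepted as clean
        self.warmup = warmup                 # readings accepted before testing starts
        self.threshold = threshold           # modified z-score cut-off (assumed value)

    def is_anomaly(self, value):
        """Return True if value is an outlier relative to recent clean readings."""
        if len(self.history) < self.warmup:
            self.history.append(value)
            return False
        med = median(self.history)
        mad = median(abs(x - med) for x in self.history)
        if mad == 0:
            # All recent readings identical: treat any different value as an outlier
            anomalous = value != med
        else:
            # 0.6745 rescales the MAD so the score is comparable to a z-score
            anomalous = 0.6745 * abs(value - med) / mad > self.threshold
        if not anomalous:
            self.history.append(value)
        return anomalous


def forward_clean(readings, edge_filter):
    """Keep only the readings that would be sent upstream."""
    return [r for r in readings if not edge_filter.is_anomaly(r)]
```

Given a temperature trace such as `[20.0, 20.1, 19.9, 20.2, 20.0, 20.1, 55.0, 20.0]`, `forward_clean` would hold back the 55.0 spike and pass the rest. A median-based test is used here because a single extreme reading does not drag the baseline the way a mean would.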

Contextual Cross-Reference

Data from one sensor is a data point; data correlated across multiple vectors is intelligence. Our Sydney lab simulates complex environments—from mining sites to high-density logistics hubs—to ensure our analytics engines recognize environmental variables that affect accuracy.
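One simple way to picture cross-referencing is a corroboration rule: an event counts only when several independent sensors report it in the same time window. The sketch below assumes each sensor has already produced a yes/no flag; the function name, sensor names, and the `min_agree` default are hypothetical.

```python
def corroborated(flags_by_sensor, min_agree=2):
    """Return True when at least min_agree sensors flag the same time window.

    flags_by_sensor maps a sensor name to a boolean anomaly flag.
    """
    agreeing = sum(1 for flagged in flags_by_sensor.values() if flagged)
    return agreeing >= min_agree


# Example window: vibration and temperature agree, current draw does not
window = {"vibration": True, "temperature": True, "current": False}
event_confirmed = corroborated(window)
```

Raising `min_agree` trades sensitivity for fewer spurious events; the right setting depends on how independent the sensors really are.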

Integrity Auditing

Before a model is finalized, it undergoes a stress test against historical high-variance datasets. This editorial standard ensures that the outcomes predicted by our systems remain reliable even when the hardware environment degrades or network conditions fluctuate.

Operational Deployment

The final intelligence solution is integrated into the client's existing workflow. We don't believe in parallel silos. Our engineers ensure that the high-signal output flows directly into decision-making centers, whether that's an automated control system or an executive dashboard.

The Sydney Proving Ground

Our facility is equipped with dedicated hardware loops for replicating Australian industrial conditions, ensuring analytics perform in the field as they do in the lab.

Editorial Standards for Data

We treat data processing with the same scrutiny as high-stakes journalism. Every report and automated signal is vetted against these three core pillars of intelligence.

Verifiability

No insight is delivered if the path from raw data to conclusion cannot be traced. We shun "black box" algorithms in favor of explainable edge intelligence.

Perishability

Data at the edge has a half-life. Where immediate operational action is required, our models weight recent readings above historical ones.
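Taken literally, a half-life suggests exponential decay: a reading loses half its influence every fixed interval. The sketch below shows that interpretation only; the function names and the 60-second half-life in the example are assumptions, not parameters of our models.

```python
def freshness_weight(age_s, half_life_s):
    """Weight of a reading that is age_s seconds old; halves every half_life_s."""
    return 0.5 ** (age_s / half_life_s)


def decayed_average(readings, now_s, half_life_s):
    """Average of (timestamp_s, value) pairs, weighted toward recent readings."""
    weighted_sum = 0.0
    weight_total = 0.0
    for timestamp_s, value in readings:
        w = freshness_weight(now_s - timestamp_s, half_life_s)
        weighted_sum += w * value
        weight_total += w
    if weight_total == 0.0:
        raise ValueError("no readings to average")
    return weighted_sum / weight_total


# A reading taken now counts twice as much as one taken a half-life ago
estimate = decayed_average([(0, 10.0), (60, 20.0)], now_s=60, half_life_s=60)
```

A shorter half-life makes the estimate react faster but also makes it noisier, so the value would normally be tuned to how quickly the monitored process can change.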

Precision

We define success by the reduction of false positives. High-signal intelligence means only alerting you when the threshold for action is actually met.
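To make the false-positive goal measurable, alerts can be scored against what actually happened. The sketch below computes precision and false-positive rate from a labelled alert log; the function name and input format are assumptions for illustration.

```python
def alert_quality(alerts, actual):
    """Score alert decisions against ground truth.

    alerts and actual are equal-length sequences of booleans:
    alerts[i] is True if an alert fired, actual[i] is True if action was needed.
    """
    if len(alerts) != len(actual):
        raise ValueError("alerts and actual must have the same length")
    tp = sum(1 for a, t in zip(alerts, actual) if a and t)
    fp = sum(1 for a, t in zip(alerts, actual) if a and not t)
    tn = sum(1 for a, t in zip(alerts, actual) if not a and not t)
    # Precision: share of fired alerts that were warranted
    precision = tp / (tp + fp) if (tp + fp) else None
    # False-positive rate: share of quiet periods that still triggered an alert
    false_positive_rate = fp / (fp + tn) if (fp + tn) else None
    return {"precision": precision, "false_positive_rate": false_positive_rate}


quality = alert_quality(
    alerts=[True, True, False, True, False],
    actual=[True, False, False, True, True],
)
```

Precision alone can be gamed by alerting rarely, so in practice it would be tracked alongside missed events.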

Development Specs

Our lab utilizes a diverse stack to ensure interoperability across legacy Australian infrastructure and next-generation edge hardware.

Native Edge Deployment

C++, Rust, and Python kernels optimized for ARM and x86 architectures.

Low-Power Wide-Area Networks

Optimized transmission protocols for LoRaWAN and satellite-linked remote sites.

Stream Analytics

In-memory processing for millisecond-latency decision engines.

Ready to audit your data strategy?

If your current analytics provide more noise than signal, it is time to shift your intelligence to the edge. Our consultants are available to review your current infrastructure and provide a custom Lab report.

+61 2 3000 0202

info@pacificedgeintel.digital

Sydney 2